The glitch from the east exposed hidden dependencies on US-EAST-1 that exist in many architectures – no matter where they think workloads are running.

The incident

On October 20 2025, AWS experienced one of the largest cloud infrastructure failures in its history.

A DNS resolution issue in the US-EAST-1 (Northern Virginia) region cascaded through AWS’s global control plane, triggering a 16-hour disruption that rippled across the

internet. The headlines:

- More than 2,000 companies were affected

- Over 8 million outage reports were logged globally

- Services across financial platforms, airlines, government systems & major consumer apps were impacted.

Even organisations with mature disaster recovery and multi-region architectures experienced service degradation or complete failure. This wasn’t just a technical glitch – it was an architectural exposure.

Half the internet on pause

The scale of disruption was unprecedented.

Snapchat’s 375 million daily users couldn’t send messages. Roblox gamers were locked out of their worlds for hours. Financial platforms such as Coinbase, Robinhood, and Venmo froze transactions, while UK government services like HMRC and major banks including Lloyds and Halifax went dark. Airlines, media outlets, and even Amazon’s own Alexa and retail systems faltered.

This wasn’t a simple application-level failure. A single infrastructure fault rippled outward, simultaneously degrading hundreds of independent services.

For nearly a day, what failed wasn’t just AWS – it was the assumption of seamless digital continuity.

How a single fault became a global failure

The incident began at 3:11AM ET when a DNS resolution failure affecting DynamoDB API endpoints in US-EAST-1 caused cascading errors in AWS’s control plane – the critical management layer that handles provisioning, authentication and service coordination across the platform.

Although DynamoDB was the initial trigger, the real problem was where that failure occurred.

US-EAST-1 isn’t just another region: it’s where global control plane infrastructure is anchored for key services, including:

- IAM – authentication and access control

- Route 53 – DNS provisioning and record management

- CloudFront – CDN configuration and distribution control

This created a global choke point. While workloads in other regions (e.g. Singapore, London, Sydney) were healthy at the data plane level, control plane operations couldn’t execute.

That meant:

- No new load balancers could be created

- IAM role updates and new policy attachments failed

- S3 replication and configuration changes stalled

- DNS-based failovers didn’t resolve properly

Control plane vs. data plane: why multi-region failed

The data plane – which serves live traffic and interacts with already-provisioned resources – held up for many workloads.

Running EC2 instances stayed online, existing S3 data remained accessible via GET operations, and previously created endpoints continued to function.

But the control plane – responsible for creating, modifying, and authenticating infrastructure – was effectively offline.

Many organisations discovered that their disaster recovery (DR) plans depended on spinning up new infrastructure in secondary regions, which required control plane activity. Without it, failover automation silently failed.

This is why multi-region deployments alone were insufficient.

It wasn’t a lack of redundancy – it was a lack of independence from a single region’s

control plane.

The illusion of high availability

Many teams assume they have high availability (HA) simply because they run in multiple regions or availability zones. But redundancy ≠ resilience.

HA is not a static setting – it’s a capability that only proves itself under failure conditions.

Typical weak points include:

- DNS or routing dependencies tied to a single control point

- Automation that implicitly relies on US-EAST-1 services

- Slow or manual failover steps during cascading outages

- Monitoring tools hosted in the same failing region

During this event, availability was binary. Systems either continued serving traffic – or they didn’t.

What real resilience looks like

The outage made clear that multi-region is not enough.

True HA requires architectures designed to operate independently of the control plane

during failover.

Key design principles include:

- Pre-provisioning critical infrastructure in secondary regions

- Relying on data plane operations only for failover

- Hosting monitoring and observability externally or in separate providers

- Avoiding implicit dependencies on US-EAST-1 in IAM, DNS and routing layers

- Using chaos engineering to simulate control plane failure, not just zone failure

A number of major enterprises discovered that their DR scripts – which looked solid on paper – couldn’t run without a functioning control plane.

This isn’t an edge case; it’s an architectural pattern that needs to change.

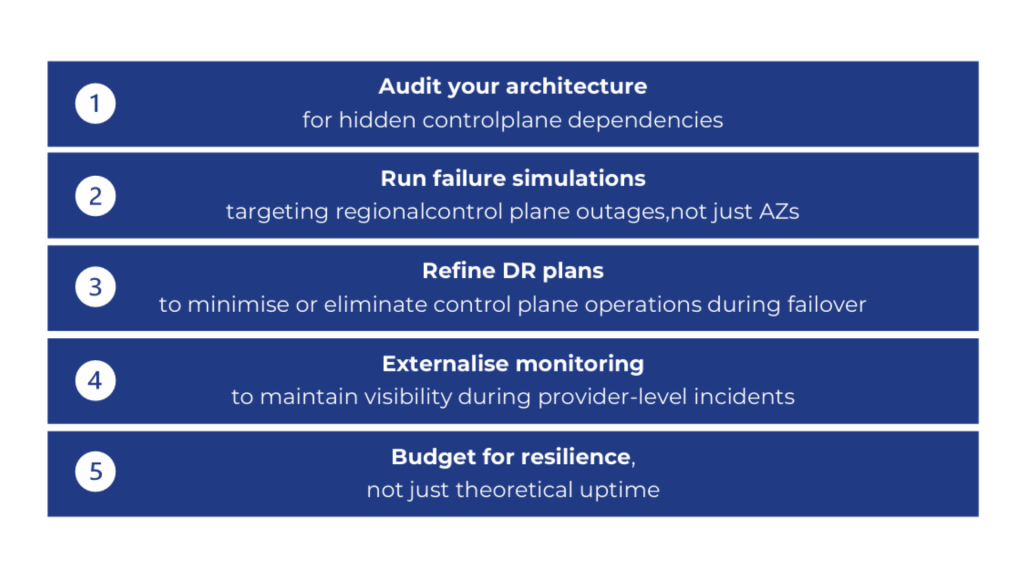

What you can do next

The organisations that engineer for failure as a baseline assumption, rather than an anomaly, will sustain availability when dependencies fail.

Key takeaway

High availability isn’t something you configure once. It’s something you prove under real failure conditions.

If your HA strategy assumes US-EAST-1 or any single region will always be available, you don’t have resilience; you have an architectural dependency waiting to surface.

The October 20 outage didn’t just take down half the internet – it exposed the fragile backbone of cloud architectures built on assumed permanence.

The next event may not test AWS. It may test you.

Looking ahead

Incidents like this test cloud providers and the real-world resilience of the systems built on top of them, alike.

Over the coming weeks, we’ll be taking a closer look at the resiliency pillar of our customers’ architectures, examining how they perform under similar control plane failure scenarios.

We’ll be sharing our findings and recommendations directly with you to help ensure your architecture can withstand future large-scale outages.