In 2024, the buzzwords and hot topics of the early 2020s are becoming standard practice. So, if you’re still in the dark about serverless, now’s the time to swot up.

This article will provide you with everything you need to get a firm grasp on serverless computing, including:

- What serverless computing is

- The technology trends that drove the development of serverless architectures

- The pros and cons of serverless applications

- What solutions are available today

- And finally, what makes a good serverless computing use case

So let’s get to it!

What is serverless?

Serverless computing is – first and foremost – a misleading term.

In peer-to-peer solutions, it’s true there’s not a server in sight, but in serverless solutions there are, in fact, servers galore.

So why ‘serverless’?

The quickest way to communicate this concept is to think about the difference between owning a car and getting an Uber.

In the first case, your car’s there whenever you need it, but you pay for insurance and maintenance even while it sits on the drive, on top of fuel.

With an Uber, you pay only for the journey. When you’re not riding in it, that Uber may as well not exist as far as you’re concerned, and that is exactly how serverless computing works.

Scaling to zero

Serverless services like AWS Lambda and Azure Functions are essentially the Ubers of virtual computing.

You upload your code and business logic (a set of instructions, like a ride plan) and then, when an event fires (an HTTP request, say, or a file landing in storage), a container or lightweight execution environment is spun up to get the job done.

When there are no events, the service scales down to zero: no execution environments are running and you aren’t paying for any compute.

It’s here when you need it; gone when you don’t; no maintenance; no upkeep.

And you don’t even get a passenger rating.
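Here is a minimal sketch of what "your code" looks like on such a platform, assuming AWS Lambda’s Python runtime, which calls a handler function with an `event` and a `context` each time something triggers it. The API Gateway-style event shape (`queryStringParameters`) and the greeting logic are illustrative assumptions; other triggers, such as queues or file uploads, send differently shaped events.

```python
import json


def handler(event, context):
    """Entry point the platform calls once per event.

    Assumes an API Gateway-style proxy event (an illustrative choice);
    the field names used here differ for other kinds of trigger.
    """
    # API Gateway sends null rather than {} when there are no query parameters.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")

    # Proxy-style response: a status code, headers and a string body.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Locally we fake the event; in the cloud, the platform builds the
    # event and context objects for us.
    fake_event = {"queryStringParameters": {"name": "rider"}}
    print(handler(fake_event, context=None))
```

Nothing in this file runs between events: the platform loads it, calls `handler` when a trigger fires, and may later discard the environment altogether.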

How did we get here? Skip this if you don’t feel like a history lesson

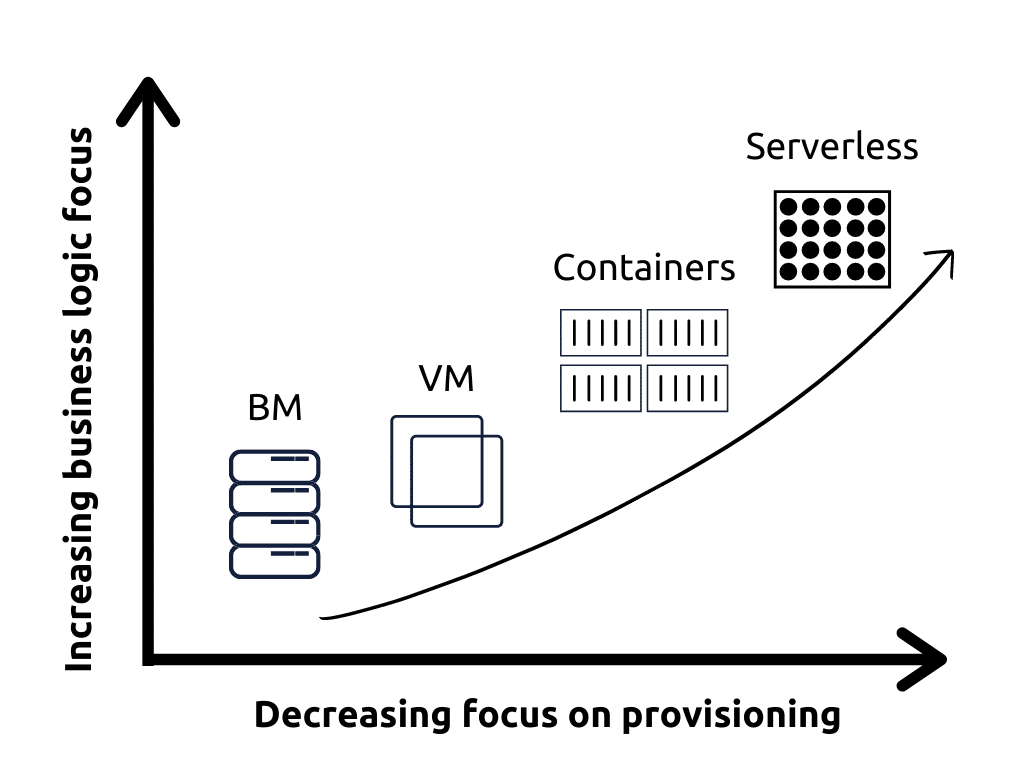

Serverless computing is the latest step in a long-running technology trend: the convergence of application and infrastructure. Cloud services are becoming more and more a part of the applications they run, and the line blurs with every new service.

As that happens, compute resources are also becoming more and more ephemeral, meaning there’s less and less to think about when it comes to provisioning.

1. BMs (bare metal servers)

Here, managing servers meant exactly that: a warts-and-all, bare-metal, plain old server. Certainly not ephemeral. There was a lot to think about in terms of patching, maintenance, cooling, insurance and everything else that comes with owning business-critical hardware.

2. VMs (virtual machines)

Public cloud providers made VMs the elementary particles of the cloud computing universe.

By partitioning physical servers into virtual machines (a process called virtualisation), a public cloud provider like AWS or Azure could supply machines that were flexible, quick to scale and cheaper. Adding a machine no longer meant installing hardware, just allocating more of an existing host’s (virtual) capacity.

However, even though the machines were virtual, server management (security, set-up) was still a big part of the job.

3. Containers

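While VMs were designed as virtualised BMs, containers are a cloud-native way to package and run software.

In essence, instead of each running a full operating system of its own, containers share their host’s OS kernel and bundle only the application and its dependencies. There’s much less to each of them, which makes them far lighter, quicker to start and easier to scale.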

Containers still need to be managed and maintained, but they are significantly more ephemeral.

4. Serverless

Serverless computing, as already discussed, goes one better. Serverless architectures are event-driven: containers or other lightweight execution environments are spun up in real time to run serverless functions in response to events, then scaled back down.

However, calling something a serverless architecture doesn’t mean the whole application is completely ephemeral in the cloud, existing purely as code when no one’s looking.

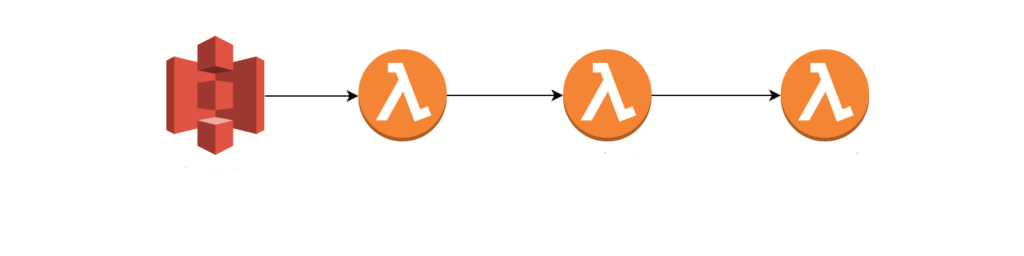

An example of a serverless architecture using AWS Lambda – going deeper

In the diagram above, the static elements of the app are hosted in S3. Serving them doesn’t involve spinning up any functions, so that part isn’t serverless in the sense we’ve been describing.

However, when handling requests that need app data, API keys or identities, Lambda spins up execution environments to do the job and then scales them back down to zero. Amazon Cognito, AWS STS and DynamoDB are managed services that the functions running in those Lambda environments interact with.

In essence, serverless applications can be more or less serverless depending on how they’re architected.

What are the pros and cons of serverless?

Pros

1. Reduced costs

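Serverless consumes no compute resources at rest, scales fast and takes server management off your hands. Using the Uber analogy, it’s easy to see why serverless is often cheaper: you’re billed for the invocations and execution time you actually use, not for idle capacity. This is one of its main draws.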
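A back-of-the-envelope comparison shows why paying per use matters for bursty workloads. The sketch assumes a pricing model like AWS Lambda’s (a per-request charge plus a charge for memory multiplied by execution time), but every price below is a made-up round number, not any provider’s actual rate, and free tiers are ignored.

```python
# Hypothetical prices for illustration only; check your provider's pricing page.
PRICE_PER_MILLION_REQUESTS = 0.20  # currency units per 1M invocations
PRICE_PER_GB_SECOND = 0.00002      # currency units per GB-second of execution
VM_PRICE_PER_HOUR = 0.05           # an always-on VM, billed busy or idle

HOURS_PER_MONTH = 730


def serverless_monthly_cost(requests: int, avg_duration_s: float, memory_gb: float) -> float:
    """Pay-per-use: a request charge plus memory x time actually spent running."""
    request_cost = requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    compute_cost = requests * avg_duration_s * memory_gb * PRICE_PER_GB_SECOND
    return request_cost + compute_cost


def vm_monthly_cost() -> float:
    """Always-on: every hour is billed, including the idle ones."""
    return HOURS_PER_MONTH * VM_PRICE_PER_HOUR


if __name__ == "__main__":
    # A bursty workload: 1M requests a month, 200 ms each, 512 MB of memory.
    fn = serverless_monthly_cost(1_000_000, avg_duration_s=0.2, memory_gb=0.5)
    print(f"serverless: {fn:.2f}/month, always-on VM: {vm_monthly_cost():.2f}/month")
```

With these hypothetical prices, a million 200 ms invocations a month come to about 2.20 against 36.50 for the always-on VM. Because the serverless bill grows with every request while the VM’s stays flat, the comparison flips at roughly 16.6 million such requests a month, so the saving is largest for workloads that spend much of their time idle.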

2. Frees up developer creativity

Concentrating only on business logic opens doors for creative development, with teams unfettered by worries about environments and provisioning. How much of that freedom you get, however, depends on how fully you adopt a serverless platform: if it’s not integral to your design, you may not capitalise on it.

3. Decreased time to market

If embraced from the beginning, a serverless approach can shorten time to market: less time spent managing the underlying infrastructure, rapid deployments, low costs and well-integrated API ecosystems.

4. Reduced latency

Because execution environments are spun up on demand rather than pinned to a fixed server, some services integrate with edge computing (AWS’s Lambda@Edge, for example) to run a function at a location close to the user making the request, cutting round-trip time.

5. Cloud-native

Serverless computing is part of the wider tapestry of cloud-native design, so it fits naturally alongside containers, managed services and the other building blocks of modern cloud architectures.

Cons

1. Multi-tenancy security issues

Because serverless providers often run functions for many different customers on the same underlying servers, serverless solutions can be more exposed to certain kinds of attacks, such as attempts to break the isolation between tenants.

2. Cold starts

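When a function is invoked and no warm execution environment is available, the provider has to create one (starting the runtime and loading your code) before it can handle the request, which adds latency. This is known as a cold start. It can be mitigated by having your application make routine calls to keep an environment warm, and some providers sell a similar guarantee as a feature (AWS Lambda’s provisioned concurrency, for example).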
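A minimal sketch of keep-warm pings, together with the related habit of doing expensive set-up outside the handler. It assumes AWS Lambda’s Python runtime, where module-level code runs once per execution environment (during the cold start) and is reused by later warm invocations, plus a scheduled EventBridge rule that invokes the function every few minutes; such scheduled events carry `"source": "aws.events"` and `"detail-type": "Scheduled Event"`. `EXPENSIVE_CLIENT` is a hypothetical stand-in.

```python
# Module-level code runs once per execution environment, i.e. during the
# cold start, and is reused by every warm invocation that follows.
# EXPENSIVE_CLIENT is a hypothetical stand-in for something slow to create,
# such as a database connection or an SDK client.
EXPENSIVE_CLIENT = {"connected": True}

_invocations = 0  # how many events this particular environment has handled


def is_warmup_ping(event):
    """Assumes the keep-warm schedule is an EventBridge rule, whose events
    carry these two fields, and that real requests do not."""
    return (
        event.get("source") == "aws.events"
        and event.get("detail-type") == "Scheduled Event"
    )


def handler(event, context):
    global _invocations
    _invocations += 1

    if is_warmup_ping(event):
        # Return straight away: the ping exists only to keep this environment alive.
        return {"warmed": True, "invocations_in_this_environment": _invocations}

    # Real work goes here, reusing EXPENSIVE_CLIENT instead of rebuilding it.
    is_cold = _invocations == 1  # first event this environment has seen
    return {"warmed": False, "cold": is_cold, "client_ready": EXPENSIVE_CLIENT["connected"]}
```

Bear in mind that a ping keeps roughly one environment warm; a sudden burst of concurrent requests can still trigger cold starts for the extra environments the platform creates.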

3. Not the best approach for long-running tasks

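Certain procedures, for example long-running data processing jobs, are better suited to dedicated servers. These are tasks that need to keep ticking away in the background, whereas serverless functions have hard execution limits (a single AWS Lambda invocation is capped at 15 minutes).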
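If you do push batch-style work through functions, one common workaround for execution limits is to process a bounded chunk per invocation and hand back a cursor so a follow-up invocation can carry on. The bookkeeping in this sketch is part of why such jobs are often simpler on a dedicated server. It assumes a Lambda-style `context` with `get_remaining_time_in_millis()` (AWS Lambda’s Python context object provides this method); the event fields `items` and `cursor`, `process_item`, the safety margin and `FakeContext` are all hypothetical.

```python
import time

SAFETY_MARGIN_MS = 10_000  # hypothetical: stop well before the hard timeout


def process_item(item):
    """Hypothetical per-item work; replace with real processing."""
    return item * 2


def handler(event, context):
    items = event["items"]           # hypothetical input shape
    cursor = event.get("cursor", 0)  # where the previous invocation stopped
    results = []

    while cursor < len(items):
        # Hand back control before the platform kills us mid-item.
        if context.get_remaining_time_in_millis() < SAFETY_MARGIN_MS:
            return {"done": False, "cursor": cursor, "results": results}
        results.append(process_item(items[cursor]))
        cursor += 1

    return {"done": True, "cursor": cursor, "results": results}


class FakeContext:
    """Local stand-in for the platform's context object, with a fixed time budget."""

    def __init__(self, budget_ms):
        self._deadline = time.monotonic() + budget_ms / 1000

    def get_remaining_time_in_millis(self):
        return int((self._deadline - time.monotonic()) * 1000)
```

Something outside the function (a queue, a workflow service or the original caller) would still need to re-invoke it with the returned `cursor` until `done` is true.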

4. Lack of operational oversight

Because serverless solutions spin up short-lived environments on demand, and you never get access to the servers underneath, debugging, gathering relevant metrics and monitoring can be difficult, and you’re more often than not reliant on the provider’s tooling.
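One common way to claw back some oversight is structured logging: emit each log line as JSON tagged with the invocation’s request ID, so the provider’s log tooling can filter and correlate it. The sketch assumes AWS Lambda, whose Python context object exposes `aws_request_id` and which forwards anything printed to stdout to CloudWatch Logs; the `log` helper and its field names are hypothetical.

```python
import json
import time


def log(level, message, context, **fields):
    """Print one JSON object per line (hypothetical helper).

    Tagging every line with the request ID lets you reassemble a single
    invocation's story even though environments come and go.
    """
    record = {
        "level": level,
        "message": message,
        "request_id": getattr(context, "aws_request_id", None),
        **fields,
    }
    line = json.dumps(record)
    print(line)  # stdout is picked up by the platform's log service
    return line


def handler(event, context):
    started = time.perf_counter()
    log("INFO", "request received", context, path=event.get("path"))

    # ... business logic would go here ...

    duration_ms = round((time.perf_counter() - started) * 1000, 2)
    log("INFO", "request completed", context, duration_ms=duration_ms)
    return {"statusCode": 200}
```

Because every line is machine-readable, questions like "which requests took longest?" become a log query rather than a manual trawl.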

5. Architectural complexity

Implementing a serverless architecture can be difficult, and a great deal of thought needs to go into how the application is split into different functions – although, of course, you do avoid the complexity of provisioning.

Serverless on AWS, Azure or Google?

Serverless and FaaS (function-as-a-service) solutions are offered by all major cloud providers: there’s Lambda from AWS, Azure Functions and Google Cloud Functions, to name a few.

However, with serverless now a mature technology, the real difference between these services is slight.

All are highly available and can support huge workloads. You could say Google supports the fewest programming languages, and some cite language support as a meaningful distinction, but really, most serverless concepts can be implemented in most of the languages on offer.

It’s often just a case of developer preference.

Interface-wise, some point to AWS Lambda’s more hold-your-hand, pop-up-box approach as a positive, since it makes it less likely that unseasoned devs will drop the ball; others prefer Azure’s more stripped-back interface.

The bottom line is that these technologies are roughly equal for most purposes, which again speaks to the fact that they’ve been developing for some years.

How to know whether to go serverless?

Serverless has many potential use cases. It can be great for:

- Start-ups or smaller companies that want to focus on their application only

- Static websites, where the speed and scalability of serverless outstrip those of VMs

- DevOps pipelines, where serverless solutions can manage the environments needed for testing

- Any workload that doesn’t need to be ‘always on’, taking maximum advantage of the fact that you pay nothing while it’s idle

However, there’s more to it than that.

Ultimately, whether or not serverless is the right architecture for you is a very complex question, and though we’d be happy to weigh in, it’s a topic for another day.

It all depends on your application, the overall business, and a whole lot more.

The takeaways

- Serverless isn’t serverless. There are servers, but they’re ephemeral

- Serverless is part of a trend in which provisioning is backgrounded, and businesses focus on code

- There are pros and cons to serverless, including, in the plus column, low costs and availability, and in the minus, vendor lock-in and loss of fine control

- Whether or not to embrace serverless is a complex question – it’s great in some circumstances, but not all; plus, it’s a matter of degree

As a next-gen MSP working with MACH technologies for leading brands, Just After Midnight is perfectly placed to help you take advantage of cloud-native tech. We work with serverless and non-serverless cloud environments providing support, cost optimisation and brand new builds.

So, if you think serverless might be the way to go, or you just want pointing in the right direction, get in touch.